Team Devtools. But for a Team of Agents.

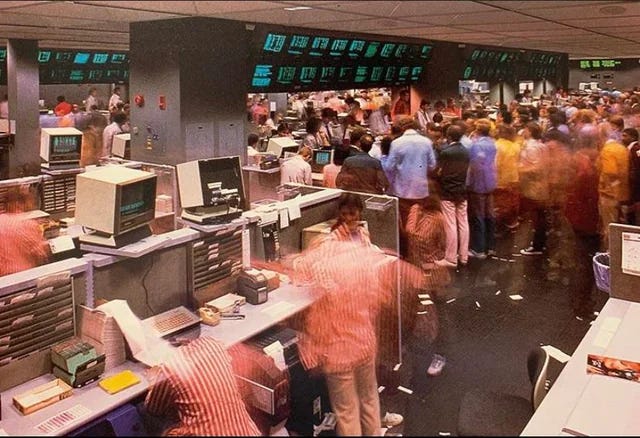

Pacific Stock Exchange Option Trading Facility, San Francisco, 1985 (credit here)

Last week I had two conversations that had nothing to do with each other.

One was with someone building infrastructure for AI agents in revenue generating workflows: systems that identify audiences, run campaigns, and continuously refine targeting. The other was with a machine learning engineer working on production systems.

They were describing different problems. But they were pointing at the same failure mode.

The break isn’t parallelism. It’s decision parallelism.

Computers have always run parallel processes. Distributed systems, async pipelines, microservices. None of this is new. That’s not what’s breaking.

What’s new is that reasoning and exploration are now happening in parallel, and non-deterministically. The bottleneck isn’t that systems can’t run multiple threads. It’s that the human coordination layer (the interfaces, the tools, the mental models) was designed for a world where one decision happened at a time. A human asked a question, got an answer, and decided what to ask next. That assumption is now wrong, and almost nothing in the stack has caught up.

On the data side: agents explore, they don’t query

Traditional data systems are built around a contract: tell me exactly what you want, and I’ll give it to you. SQL, APIs, even most modern data pipelines assume the question is known before it’s asked. Planned. Deterministic.

Agents don’t work that way. They pull some data, see something interesting, immediately try ten variations, adjust, loop. The query isn’t known in advance; it’s constructed from what was just learned. Exploration is the work, not a path toward it.

This breaks query based systems not because they’re too slow in the conventional sense, but because they’re the wrong primitive entirely. Repeated round trips to a database assume you’re converging on a known answer. Agents are doing something more like traversal, moving through a space of possibilities, not retrieving a result.

What I’ve seen people move toward is a different kind of data layer: one where relationships are already materialized, where the agent navigates rather than queries. Knowledge graphs, vector indices, precomputed decision surfaces. These aren’t new ideas, but they’re being repurposed for a different workload. The break from prior work isn’t the technology; it’s the access pattern. Instead of posing questions and waiting for answers, the agent moves through a space where relevant relationships are already resolved. The latency characteristics and the interface contract are fundamentally different.

I don’t think we have a clean name for this category yet. But it’s not BI, it’s not analytics, and it’s not a better SQL wrapper. It’s something closer to a decision layer: infrastructure designed for agents that need to act on data quickly, not humans who need to understand it slowly.

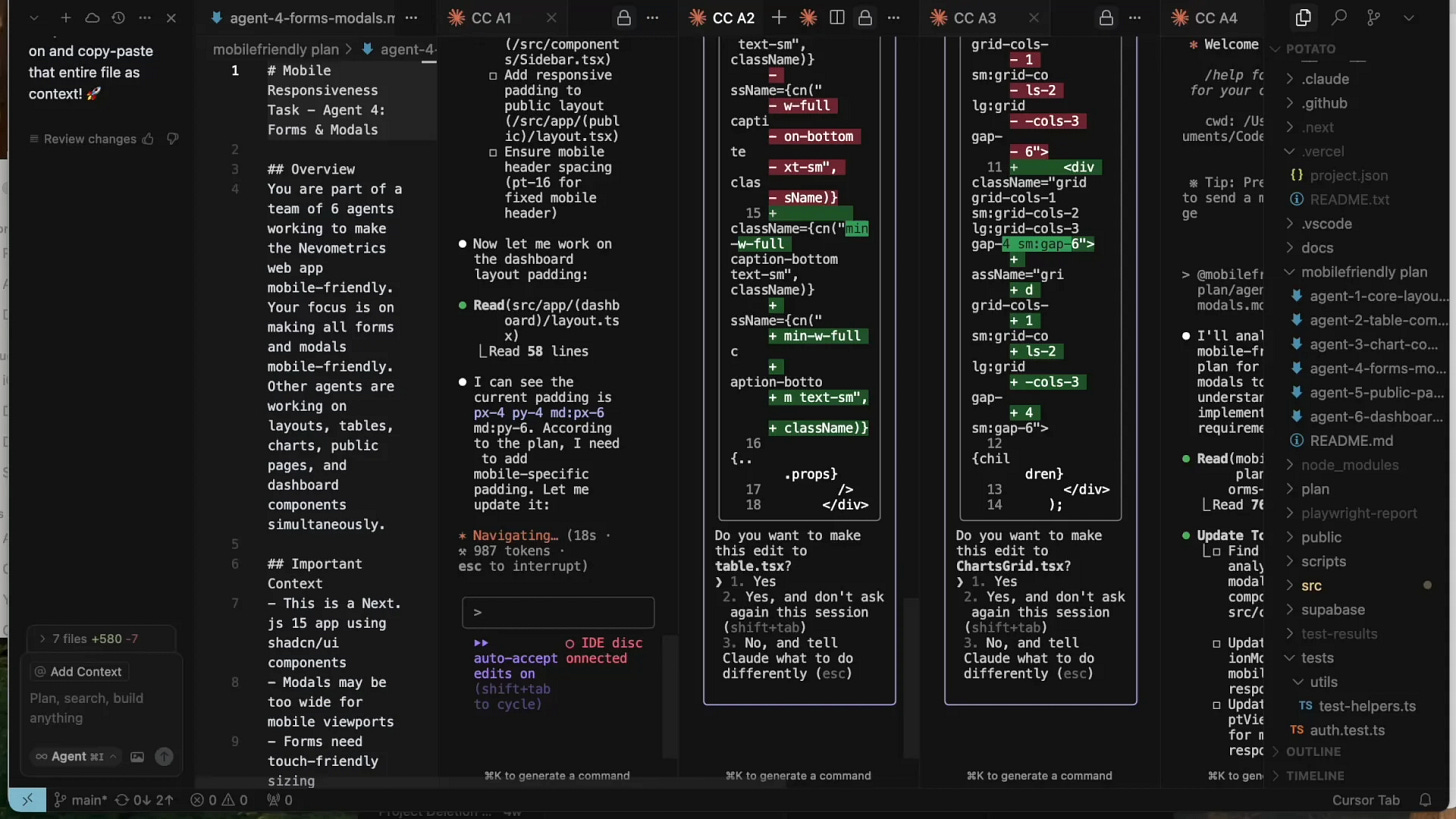

6 agents in parallel. Step 3: pray / step 4: pray some more (image credit)

On the developer side: the bottleneck is keeping track

The engineer I spoke with described his workflow like this: multiple agents running in parallel, each attempting different approaches, returning results at different times. He’s not writing code step by step anymore. He’s monitoring, redirecting, stitching. The system produces output fast. What doesn’t keep up is him.

Think about what this shift actually means. Moving from writing code by hand to writing code with agents feels like jumping all the way from writing assembly code to writing JavaScript using all the modern tooling and web frameworks. It’s a crazy shift “up” in the stack. In some ways it doesn’t feel like doing the same thing we’ve been doing for the last 50 years, at all. But in other ways it still feels exactly like programming, except that we hardly have any new tooling wrapped around this new kind of programming.

And the human multithreading problem compounds this. While you’re waiting on one agent to return, you’re spinning up another. Human “single-threaded” work (write the code, run the model, reason through the problem yourself) is going down dramatically, replaced by “spec it out with the agent and hit enter.” People needed to learn to multithread when waiting for MapReduce jobs, model training runs, CI/CD pipelines. This is that, but the tasks themselves are now the waiting, not the preamble to it.

This isn’t a missing feature. It’s an interface paradigm problem. We built developer tooling for a world where a human was the executor: write code, run it, debug, repeat. One thread. One brain loop. Even the best IDEs today are optimized for sequential attention.

What this engineer needs doesn’t exist yet. Not because no one has built the right feature, but because no one has figured out what control even means when the work is non-linear. Air traffic control had this problem. Trading floors had this problem. Both developed entirely new interfaces, roles, and mental models, not just faster versions of what came before.

There’s a precise way to say what’s missing: these are team devtools. But for a team of agents.

We’re at the beginning of that process for agent-native developer work. Right now, people are managing it with tabs and logs and intuition. It works, until it doesn’t.

JFK, 1968. Congestion reached never-seen-before levels. They built new interfaces, new roles, new mental models. Not faster versions of what came before. (source)

These are the same shift at different layers

On the data side: agents need to explore a decision space quickly, without the latency and rigidity of repeated queries.

On the developer side: humans need to maintain coherent control over multiple agents pursuing different paths simultaneously.

Both break because the existing infrastructure assumes a single thread of decision-making. The shift isn’t just to probabilistic systems. It’s to parallel, exploratory ones. And almost nothing in the stack was designed for that.

The open question I’m sitting with

Is this structural, or is it a workaround for current model limitations?

There’s a pattern worth taking seriously: orchestration tends to be created to remedy deficiencies in models. It provides a short-term solution, but eventually the desired capabilities get integrated natively into the model layer, and most of the scaffolding gets jettisoned. RAG was a way to mitigate context size limits. The process repeats. If orchestration is just a seam, it will eventually disappear.

If future models become good enough at self-coordination, if the orchestration problem gets absorbed into the model layer, then the human interface problem partially dissolves. Agents coordinate themselves, data access gets handled internally, and the category doesn’t need to exist.

I don’t think that’s where we’re headed, but I hold it seriously. And worth noting: “orchestration” is itself a moving target. Every time models get better, we try to do more with them. We’ll mean something different by orchestration next year than we do today. The category keeps shifting forward.

What I keep coming back to is that even if models improve dramatically, the human accountability layer doesn’t go away. Someone still needs to understand what agents did, why, and whether it should have happened. And there’s something deeper here: making agent activity legible isn’t just about oversight or logging. It’s about surfacing unknown unknowns: preferences, context, judgment that lives in the human’s head and hasn’t been articulated yet. That’s a coordination and legibility problem that better models don’t solve on their own.

What I’m looking for

The tooling list writes itself. IDEs, version control, CI/CD, testing, bug trackers. Every layer of the developer stack was designed for a world where one human executed one thread of work. None of it has caught up. Each one is a company.

Infrastructure that makes agent exploration over data fast and navigable, not better querying, but a different access pattern designed for traversal and speed.

And systems that make parallel agent workflows legible and controllable for humans, not just dashboards or logs, but a new interface paradigm for work that doesn’t move in a line.

The companies building this probably don’t have a clean category name yet. That’s usually the right sign.

If you’re building in either direction, or if you’re working in production systems where you’ve felt both of these break, I’d genuinely like to talk. Not pipeline. I want to think through it.

Thanks to Kwindla Hultman Kramer (Daily), Justin Uberti (OpenAI), Piotr Migdal (Quesma), Catalin Tiseanu (Coinbase), and Yiliu Shen-Burke (stealth) for reading drafts of this.

About Motive Force: We back technical founders at the idea stage, before the category has a name. If that's you, reach out.

we are actively building for “Someone still needs to understand what agents did, why, and whether it should have happened” problem.

would love to share what we have learned