Robotics Is Not About Robots

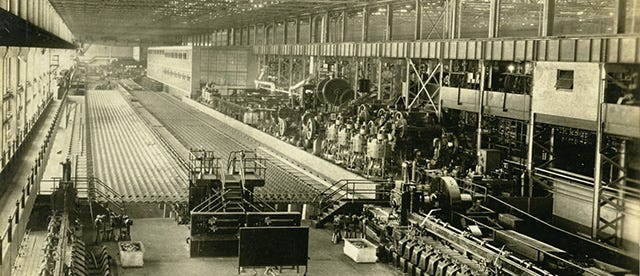

Henry Ford’s Rouge

A robot running at 95% accuracy sounds impressive. In a factory running fifty cycles an hour, that means 2.5 failures an hour, or about twenty failures in every eight-hour shift. Each one requires a human to intervene, log the incident, reset the system, and decide what happens next. The robot is working. The deployment isn’t.
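A quick way to sanity-check this kind of arithmetic is sketched below. It treats "accuracy" as a per-cycle success rate; the function name and the eight-hour shift are assumptions made for illustration, not figures from any particular deployment.

```python
def expected_failures(cycles_per_hour: float, shift_hours: float, success_rate: float) -> float:
    """Expected number of failed cycles in one shift."""
    total_cycles = cycles_per_hour * shift_hours
    return total_cycles * (1.0 - success_rate)


# 50 cycles/hour and 95% success come from the text above;
# the 8-hour shift length is an assumption for this sketch.
per_shift = expected_failures(cycles_per_hour=50, shift_hours=8, success_rate=0.95)
print(round(per_shift, 1))  # 20.0
```

The count scales linearly with cycle volume: at the same success rate, 1,000 cycles in a shift would produce about fifty failures. Every one of them still needs a human to step in.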

This is the gap that ends most robotics companies. Not the hardware. Not the vision model. Not the manipulation benchmark. The system around the robot.

We have been spending time with founders and engineers working in physical AI, and the same failure mode keeps surfacing. The robotics conversation is almost entirely about the machine: better models, better form factors, better demos. The deployment conversation barely exists. And almost nothing in the stack was built for what comes after the demo.

Physical AI is not a robot problem. It’s a skills, workflows, and learning system problem.

The hierarchy nobody draws

At its core, a robot is just an embodiment: joints, sensors, actuators. The real abstraction sits above the machine.

Physical work can be structured as a hierarchy: scenarios decompose into skills, skills into tasks, tasks into trajectories. This defines how complex industrial processes become machine-executable actions. It is also, not coincidentally, how enterprise software has always modeled work. The systems we worked on at SAP were built on exactly this kind of decomposition. What’s different now is that AI can learn the levels of this hierarchy, not just execute against a fixed specification.
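A minimal sketch of that decomposition as a data model follows. All class names, fields, and the palletizing example are hypothetical, chosen only to show how a scenario resolves into executable trajectories; this is not a description of any specific product's schema.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Trajectory:
    name: str
    waypoints: List[Tuple[float, float, float]]  # hypothetical x, y, z positions


@dataclass
class Task:
    name: str
    trajectories: List[Trajectory] = field(default_factory=list)


@dataclass
class Skill:
    name: str
    tasks: List[Task] = field(default_factory=list)


@dataclass
class Scenario:
    name: str
    skills: List[Skill] = field(default_factory=list)


def flatten(scenario: Scenario) -> List[Trajectory]:
    """Walk the hierarchy top-down and return trajectories in execution order."""
    return [
        trajectory
        for skill in scenario.skills
        for task in skill.tasks
        for trajectory in task.trajectories
    ]


# Hypothetical example: one scenario, one skill, two tasks.
palletizing = Scenario(
    name="palletize outbound boxes",
    skills=[
        Skill(
            name="pick and place",
            tasks=[
                Task("pick box", [Trajectory("approach", [(0.0, 0.0, 0.5), (0.0, 0.0, 0.1)])]),
                Task("place box", [Trajectory("move to pallet", [(0.0, 0.0, 0.5), (1.0, 0.0, 0.5)])]),
            ],
        )
    ],
)

print([t.name for t in flatten(palletizing)])  # ['approach', 'move to pallet']
```

In a fixed specification every level of this tree is authored by hand. The point above is that each level can instead be learned and revised from data.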

The primary challenge is therefore not the robot. It’s the toolchain that lets robots learn, operate, and improve: simulation environments, workflow models, perception and planning stacks, data pipelines, the software that converts human demonstrations into reusable skills. This is the infrastructure that lets automation move beyond isolated machines toward scalable systems.

Hardware is a data acquisition device

Here is the reframe that matters for thinking about where value accumulates.

Robots increasingly function as data acquisition devices. Each deployment generates operational telemetry and demonstrations that feed back into learning systems. Over time, those feedback loops produce skill libraries, workflow abstractions, and training datasets that let automation capabilities transfer across tasks, sites, and embodiments.

This is why many of the most interesting robotics founders are not primarily designing new machines. They are building learning systems that combine simulation, real-world telemetry, and human motion data to continuously improve performance across fleets. The compounding advantage is in the accumulation of operational data and the ability to convert it into reusable automation capabilities.

One idea we keep coming back to from several conversations: the intelligence doesn’t have to live inside the robot. One architecture inverts the assumption entirely: the brains are in the environment, and the robot is just a tool the physical model uses to act on the world. That inversion changes the cost structure, the upgrade path, and the distribution model. It also makes the robot itself much cheaper, and the cost of the robot is the actual bottleneck for adoption in most markets.

The deployment gap is real, and it’s not about the model

The pattern is consistent: great demos, almost no real deployments. And when companies do deploy, they hit a different set of problems than they expected.

Integration with existing operations. Financing and asset lifecycle. Service networks. Change management. Human workflow alignment. These are not engineering problems. They are adoption problems, and almost nothing in the robotics stack was built to solve them.

The deployment stack is where most robotics companies die: not on the benchmark, but on the installation. Nobody can plug the robot into the existing ticketing system. Nobody has figured out how to triage a failure at 2 a.m. The operator doesn’t know when to trust it and when to override. And the vendor who sold the hardware has no infrastructure to help.

This is the tank problem. In the Second World War, Germany built the most sophisticated tanks but could never produce or support them at scale. The Soviet Union built the most tanks of all, but designed them as disposable items. The United States built simpler tanks at scale, backed by a massive supply chain and service network.

Robotics is still in the beautiful tank era. The market winners will build the whole logistics network.

Berlin, summer 2024. Research.

The agent runtime is the missing layer

There is something happening in the software stack that will define which robotics companies matter in the next few years, and it is barely being discussed.

The architecture of a capable physical AI system comes down to two things: the model and the agent runtime. The models are getting better, and everyone is paying attention to that. The agent runtimes are almost invisible in the conversation, and yet the performance of the whole system depends heavily on them.

An agent runtime for physical AI has to do things that software has never had to do before. Operate continuously, not just on demand. Manage asynchronous input from the physical world. Reason about state across long time horizons. Coordinate between multiple agents pursuing different objectives in the same physical space. Log decisions in a way that’s legible to humans who need to understand what happened and why.
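To make those requirements concrete, here is a deliberately small sketch of a runtime loop using Python's `asyncio`. It covers only three of the points above: running continuously, consuming asynchronous events, and keeping a human-readable decision log. The `AgentRuntime` class, the event format, and the 0.5 m rule are all hypothetical, and the placeholder `decide` method stands in for whatever model or planner a real system would call.

```python
import asyncio
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentRuntime:
    state: dict = field(default_factory=dict)         # latest value per sensor
    decision_log: list = field(default_factory=list)  # audit trail for humans

    def decide(self, event: dict) -> tuple:
        """Placeholder policy; a real system would call a model or planner here."""
        if event["sensor"] == "proximity" and event["value"] < 0.5:
            return "pause", "object closer than 0.5 m"
        return "continue", "no rule triggered"

    async def run(self, events: asyncio.Queue) -> None:
        """Run until a None sentinel arrives, handling events as they come in."""
        while True:
            event: Optional[dict] = await events.get()
            if event is None:
                break
            self.state[event["sensor"]] = event["value"]
            action, reason = self.decide(event)
            # Record what was seen, what was done, and why.
            self.decision_log.append(
                {"time": time.time(), "event": event, "action": action, "reason": reason}
            )


async def demo() -> list:
    events: asyncio.Queue = asyncio.Queue()
    runtime = AgentRuntime()
    worker = asyncio.create_task(runtime.run(events))
    await events.put({"sensor": "proximity", "value": 2.0})
    await events.put({"sensor": "proximity", "value": 0.3})
    await events.put(None)  # sentinel: shut the loop down
    await worker
    return runtime.decision_log


log = asyncio.run(demo())
for entry in log:
    print(entry["action"], "-", entry["reason"])
```

The sketch leaves out the hard parts: reasoning about state over long horizons, and coordinating several agents with different objectives in the same physical space.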

This is not a solved problem. What most people are building today are either model wrappers or task-specific automation. Neither is infrastructure for continuous physical operation. Neither was designed for a world where the agent is running, the robot is acting, and a human somewhere needs to understand and trust all of it.

The companies building the agent runtime layer for physical AI don’t have a clean category name yet. That’s usually a good sign.

Where value actually accrues

Selling a robot is a capex transaction. Scaling automation requires owning the workflow layer: the point where robotic skills integrate with customer operations, business processes, and human workflows.

That layer determines adoption. It determines utilization. It determines whether a company accumulates the operational data that makes the next deployment better than the last one. And it determines whether, when a customer’s processes change, the automation changes with them or breaks.

The companies that define this market won’t necessarily be those that build the most sophisticated machines. They will be those that develop the most effective learning and workflow systems around machines. The robot is the vessel. The system is the product.

What we’re looking for

The category map writes itself. Simulation and training infrastructure. Workflow modeling tools. Agent runtimes for continuous physical operation. Incident management and operational orchestration. Context layers for physical assets. End-to-end deployment platforms that treat automation as a service, not a product.

None of these require building the robot. All of them are more defensible than the robot.

We’re looking for founders who see physical AI as a systems problem, not a hardware problem. Who understand workflows and operations, not just models and sensors. Who think in hierarchies: what is the scenario, what are the skills, what are the tasks, what are the trajectories, and how does learning flow back up through all of them.

If you’re building in this layer, or working in physical environments where you’ve felt the deployment gap firsthand, we’d genuinely like to talk.

About Motive Force: We back technical founders at the idea stage, before the category has a name. If that’s you, reach out.

About Christian Dahlen: Angel investor in robotics and industrial software, with a Ph.D. in engineering. Select investments include Wandelbots, deltia.ai, and SDA.